Do we need more medical imaging?

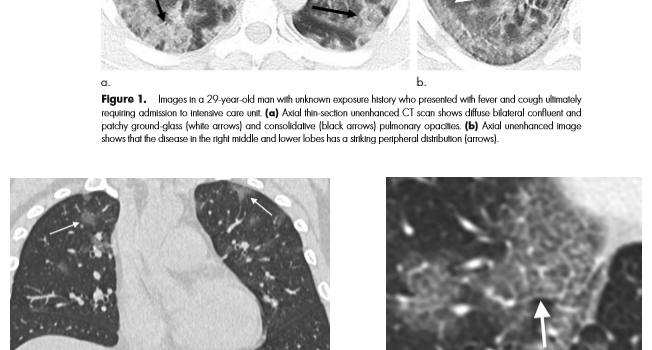

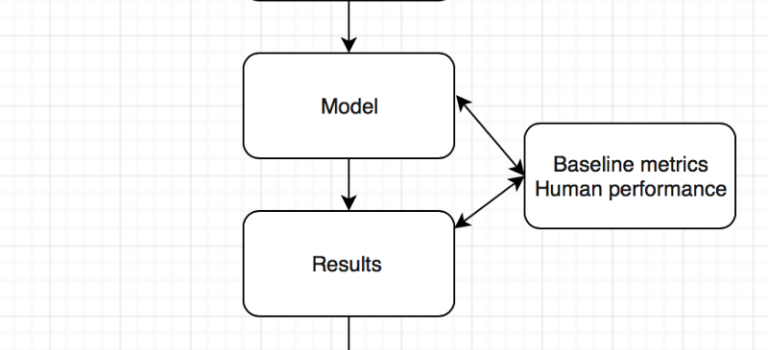

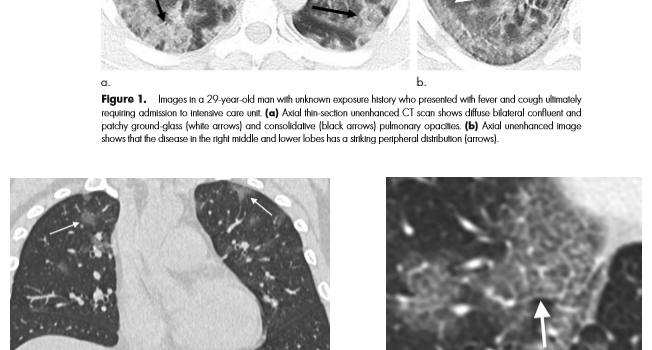

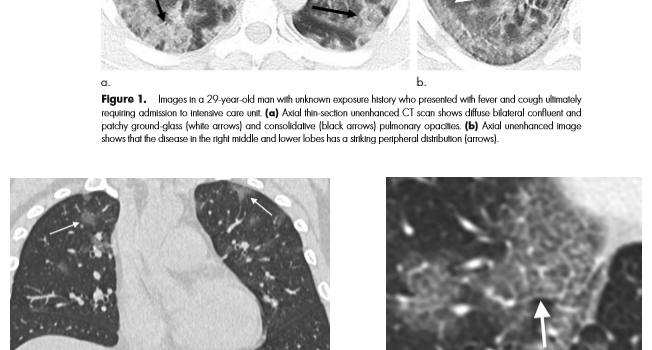


The healthcare blog of Stephen M. Borstelmann MD